The High-Energy Group at Mac

High energy physics is a small but growing area at McMaster, building on strong links to nearby institutions such as the Perimeter Institute.

High-Energy Physics at the Crossroads

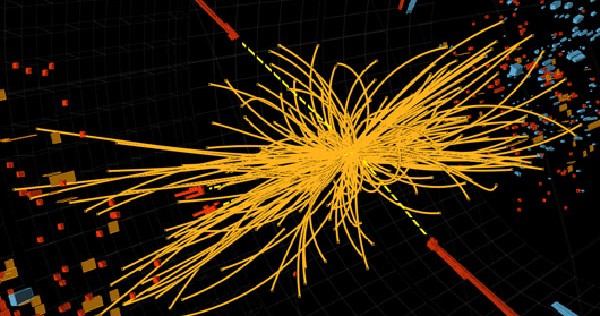

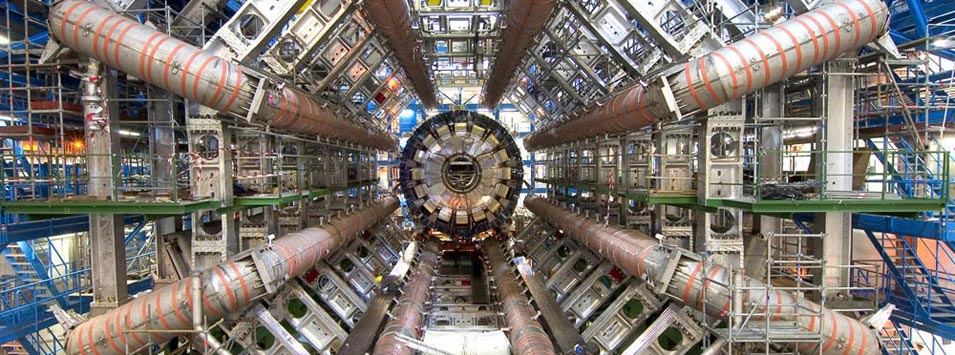

This is a very exciting time to be working in particle physics, since we stand at the threshold of new discoveries likely to be driven by experiments at the Large Hadron Collider. (The first of these discoveries has already arrived: the Higgs boson.)

The Scientific Context: Particle Physics

The scientific mandate of subatomic physics is to identify the elementary constituents of matter and their physical properties, to identify the fundamental forces through which they interact, and to understand how these ingredients combine to produce the organization we see around us in Nature. Unusually, we stand on the threshold of a complete rethinking of our answers to these questions, one that is likely to force us beyond the Standard Model, the theory that embodies the 40-year-old consensus about what these basic constituents and forces are.

Four centuries of study have revealed that Nature comes to us in an enormous hierarchy of scales, ranging from elementary particles at the smallest sizes up to the observable universe as a whole at the largest distances. What ultimately makes the study of Nature possible at all is the remarkable fact that we don’t need to understand all of these scales at once: understanding the flow of traffic doesn’t require detailed knowledge of the engines that propel the vehicles. Similarly, understanding atoms does not require detailed knowledge of nuclei, and so on.

Yet although the properties of Nature at any one scale are largely independent of most of the details of more microscopic physics, some of these details do turn out to be important for our explanation of the properties of larger systems. The properties of car engines do constrain the average speeds that are possible for traffic flows. Similarly, some chemical and thermal properties of matter depend on the size of atoms; atomic sizes depend on the properties — mass and couplings — of the constituent electrons and nuclei, and so on. For this reason the properties of matter on the smallest scales play a fundamental role in science; they underpin many of the explanations of why larger things behave the way they do.

Subatomic physics represents the cutting edge of our knowledge of physics at the smallest scales accessible to us. The context for present-day research starts with a summary of the paradigm that has held until now, together with the reasons it is now believed to be inadequate.

What are the constituents of matter?

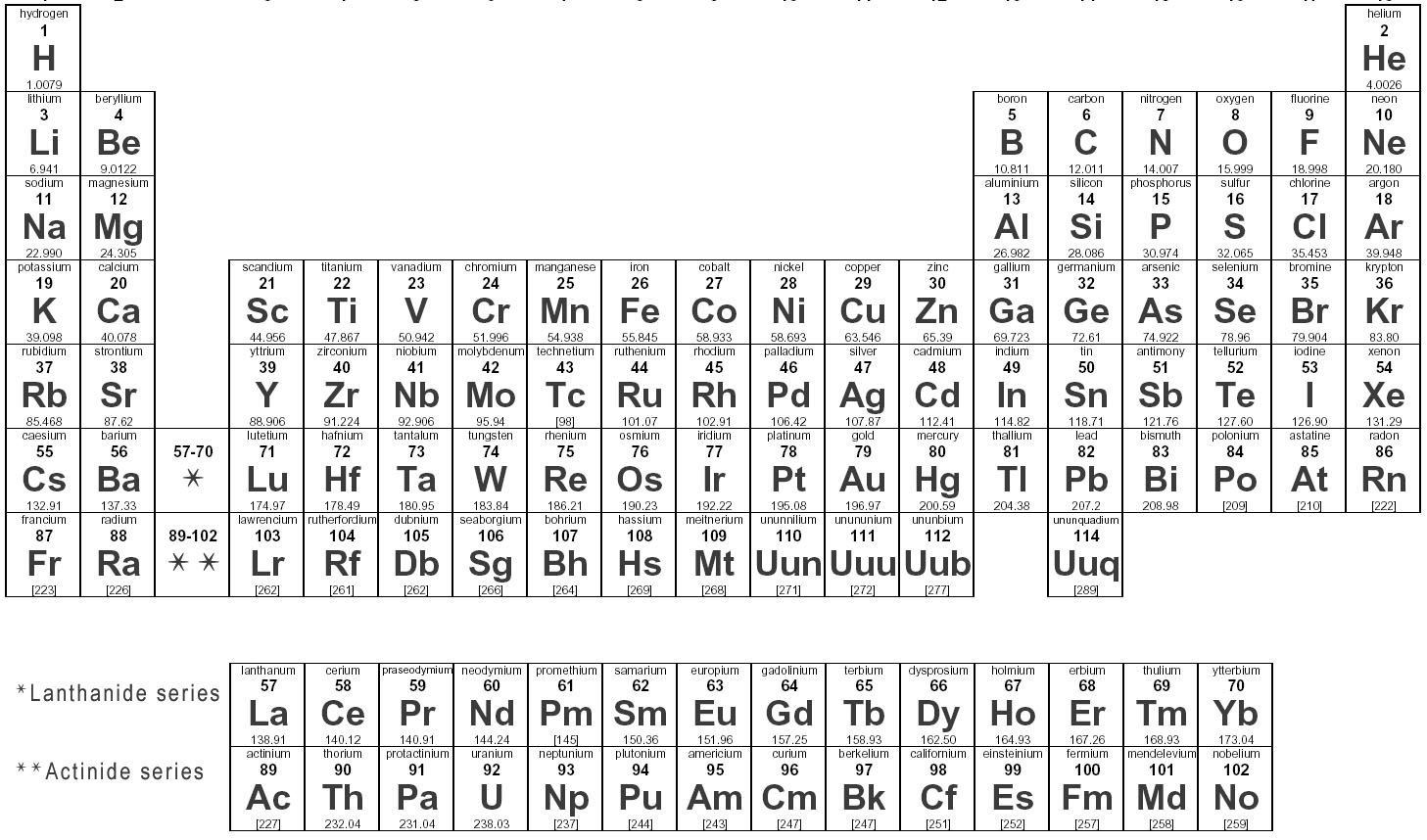

The twentieth century saw enormous progress in identifying the fundamental constituents of matter. In the early 1900s these were thought to consist of several dozen types of atoms, together with some oddities like the then-recently-discovered electron and products of radioactive decay. The discovery of quantum mechanics and the nucleus then allowed the many properties of atoms to be inferred from those of nuclei and electrons. The recognition that nuclei are themselves built from smaller things eventually led to a much more economical list of fundamental objects: protons, neutrons and electrons.

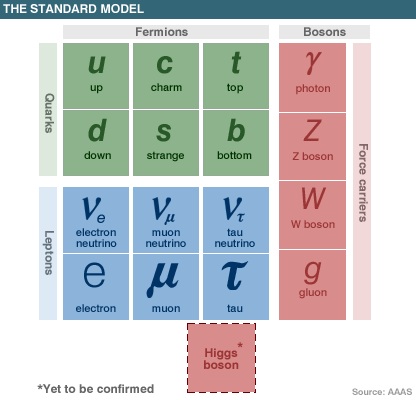

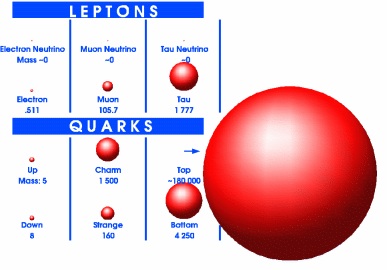

New particles, including muons, pions and a horde of others, continued to be discovered, initially through studies of the cosmic rays that continuously bombard our atmosphere from space. This temporarily led to a much more complicated picture, whose underlying simplicity did not emerge until the 1960s, when many of the particles known by then were found to be made of still smaller constituents. The list of elementary particles that eventually emerged remains the same today: six species of ‘quarks’ (up, down, strange, charm, bottom and top) and six species of ‘leptons’ (the electron, muon and tau, and three species of neutrinos).

The resulting list of particles is strangely redundant. Essentially all everyday matter is made of just electrons and up and down quarks (the quarks make up protons and neutrons), which, together with a neutrino, form what is called the ‘first generation’ of elementary particles. Remarkably, Nature seems to come with two more ‘generations’ of particles whose properties copy those of the first generation apart from their masses (the charm and top quarks resemble the up quark; the strange and bottom quarks resemble the down quark; the muon and tau are copies of the electron; and so on).

How do the constituents interact?

Progress has also been made in identifying the forces through which these particles interact. Four interactions are now recognized as fundamental, of which only two, gravity and electromagnetism, had been identified by the 19th century. The other two, the strong and weak forces, emerged later as the interactions responsible for binding quarks into protons and neutrons (and these into nuclei), and for some of their radioactive decays. In the modern description each force is associated with a field whose quanta (gravitons, photons, gluons, and W and Z bosons) can be produced in reactions much like any other elementary particle, although gravitons interact so feebly that none has yet been detected.

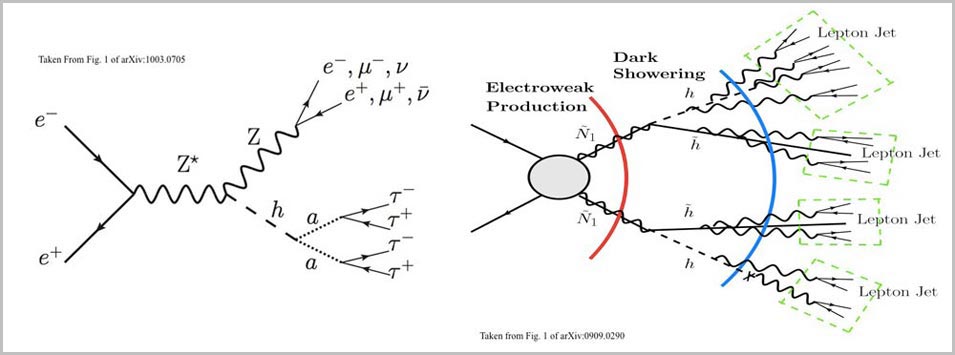

The 1970s saw a great synthesis of these constituents and interactions into a very successful theory, the Standard Model, which survives (slightly battered) today as the best theoretical benchmark we have. The Standard Model describes in detail how the fundamental quarks and leptons interact through three of the four forces: the weak, strong and electromagnetic interactions. It also predicts one further particle, the Higgs boson, whose presence (or the presence of something similar) is required by the theory’s mathematical consistency; a particle with the expected properties was discovered at the Large Hadron Collider in 2012.

One of the Standard Model’s great successes is the way it naturally explains many patterns inferred from numerous experiments over the years. In particular, it accounts for several exact and approximate conservation laws that work very well in practice. Among these are the conservation of ‘parity’ by three of the four interactions (all but the weak force); the approximate conservation of CP (parity combined with the interchange of particles and antiparticles); the conservation of baryon number, B (inferred from the absence of proton decay); and the separate conservation of the lepton number of each generation, Le, Lµ and Lτ (inferred from the absence of processes like µ → e γ, in which a muon decays into an electron and a photon).
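The bookkeeping behind these conservation laws is simple addition of quantum numbers. As a minimal sketch, the Python snippet below assigns each lepton its (Le, Lµ, Lτ) values and checks whether a process conserves all three separately. The particle labels and the table are illustrative names chosen here, not taken from any standard library.

```python
# Lepton-flavour numbers (L_e, L_mu, L_tau) for a few particles.
# Antiparticles carry the opposite sign; the photon carries none.
# The string labels are illustrative names chosen for this sketch.
LEPTON_NUMBERS = {
    "e-": (1, 0, 0), "e+": (-1, 0, 0),
    "nu_e": (1, 0, 0), "anti_nu_e": (-1, 0, 0),
    "mu-": (0, 1, 0), "mu+": (0, -1, 0),
    "nu_mu": (0, 1, 0), "anti_nu_mu": (0, -1, 0),
    "tau-": (0, 0, 1), "tau+": (0, 0, -1),
    "nu_tau": (0, 0, 1), "anti_nu_tau": (0, 0, -1),
    "gamma": (0, 0, 0),
}


def total_lepton_numbers(particles):
    """Sum (L_e, L_mu, L_tau) over a list of particle labels."""
    totals = [0, 0, 0]
    for p in particles:
        for i, n in enumerate(LEPTON_NUMBERS[p]):
            totals[i] += n
    return tuple(totals)


def conserves_lepton_flavour(initial, final):
    """True if L_e, L_mu and L_tau are each unchanged by the process."""
    return total_lepton_numbers(initial) == total_lepton_numbers(final)


# Ordinary muon decay: mu- -> e- + anti_nu_e + nu_mu is allowed.
print(conserves_lepton_flavour(["mu-"], ["e-", "anti_nu_e", "nu_mu"]))  # True
# mu -> e gamma would change L_e and L_mu, so it is forbidden.
print(conserves_lepton_flavour(["mu-"], ["e-", "gamma"]))  # False
```

The check treats each lepton number as an independent additive charge, which is exactly what "separate conservation" means; neutrino oscillations are evidence that this bookkeeping is not exact in Nature.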

Although the Standard Model provides an impressive synthesis of three of the interactions, the fourth force, gravity, remains outside it. Gravity is well described over astrophysical distances by Einstein’s General Relativity, but no established theory does a good job of describing it over very short distances, where quantum effects become important.

How are they organized?

The discoveries leading to the Standard Model provide a precise snapshot of the constituents of matter and their interactions down to distance scales that are just beginning to be surpassed now. Although this represents a major triumph, it is only the first step towards understanding the properties of systems involving two or more particles.

Experience shows us that systems with many particles often aggregate into complicated states that exhibit a broad diversity and richness of properties. Typically it is the particles interacting through the strongest forces that organize themselves into bound states on the smallest scales. Quarks and gluons (which feel the strong force) typically combine into nuclear matter that can take on a myriad of forms (protons and neutrons, nuclei, a quark-gluon plasma) depending on the pressures and temperatures involved. Larger systems built from these, such as atoms and molecules, are usually bound through the next-strongest interaction: electromagnetism. The weakest long-range force, gravity, is the main player for the largest objects, like planets, stars and galaxies.

The study of how these different kinds of matter behave is of great interest in its own right, and is an important source of our confidence in the Standard Model’s success in describing experiments (apart from a few recent exceptions, described below). The study of matter in extreme environments, such as exotic nuclei, the interiors of neutron stars, stellar explosions or the very early universe, is of ongoing interest for a variety of reasons. These include understanding qualitatively new forms of matter that can arise at extreme energies and densities; the past and current evolution of the universe as a whole; the role of extreme environments in producing the elements we find in Nature today; and so on.

Why do we think the present paradigm must fail?

Despite the great success the Standard Model has enjoyed in tests over the decades since its discovery, recent years have revealed signs of incipient failure. In particular, these newly found flaws suggest that it is very likely to fail at the distances just now becoming accessible at the highest-energy accelerators. The following paragraphs summarize this evidence; subsequent sections describe in more detail the role it plays in guiding the ongoing research program worldwide.

Neutrino Oscillations

Perhaps the clearest evidence to date for the Standard Model’s failure is the discovery of neutrino oscillations. Decades of effort culminated in the late 1990s in the demonstration that neutrinos of one generation can turn into neutrinos of another generation as they travel. Evidence for this came first from neutrinos produced deep within the Sun and from neutrinos produced by cosmic rays in Earth’s upper atmosphere, and was later confirmed by experiments at accelerators. In essence, these observations imply that Le, Lµ and Lτ are not separately conserved, most likely because neutrinos have nonzero masses. This requires moving beyond the Standard Model, and may have important implications for cosmology (the study of the evolution of the universe as a whole) and astrophysics.
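Why oscillations point to neutrino mass can be seen from the standard two-flavour approximation for oscillations in vacuum, P = sin²(2θ) sin²(1.267 Δm² L / E), with the mass-squared difference Δm² in eV², the distance L in km and the energy E in GeV. The sketch below simply evaluates that textbook formula; it ignores matter effects (important for solar neutrinos) and three-flavour mixing, and the parameter values in the usage lines are illustrative rather than fitted data.

```python
import math


def two_flavour_oscillation_probability(theta, delta_m2_ev2, length_km, energy_gev):
    """Probability that a neutrino of one flavour is detected as the other.

    Standard two-flavour vacuum formula (no matter effects):
      theta        -- mixing angle in radians
      delta_m2_ev2 -- difference of the squared masses, in eV^2
      length_km    -- distance travelled, in km
      energy_gev   -- neutrino energy, in GeV
    """
    phase = 1.267 * delta_m2_ev2 * length_km / energy_gev
    return math.sin(2 * theta) ** 2 * math.sin(phase) ** 2


# With equal (for example, all zero) masses there is no oscillation at all,
# however large the mixing angle is.
print(two_flavour_oscillation_probability(0.6, 0.0, 500.0, 1.0))  # 0.0

# Illustrative values only: maximal mixing and a nonzero mass splitting.
print(two_flavour_oscillation_probability(math.pi / 4, 2.5e-3, 500.0, 1.0))
```

The key design point is that the mass splitting enters only through the phase: set Δm² to zero and the probability vanishes identically, which is why observing flavour change implies that at least some neutrinos are massive.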

Dark Matter and Dark Energy

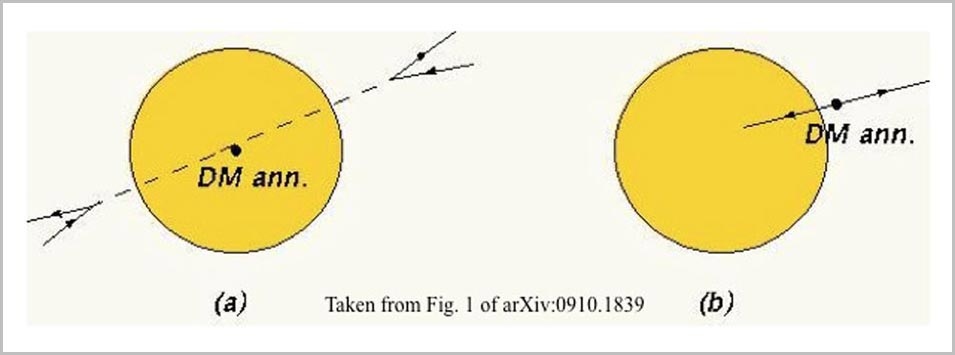

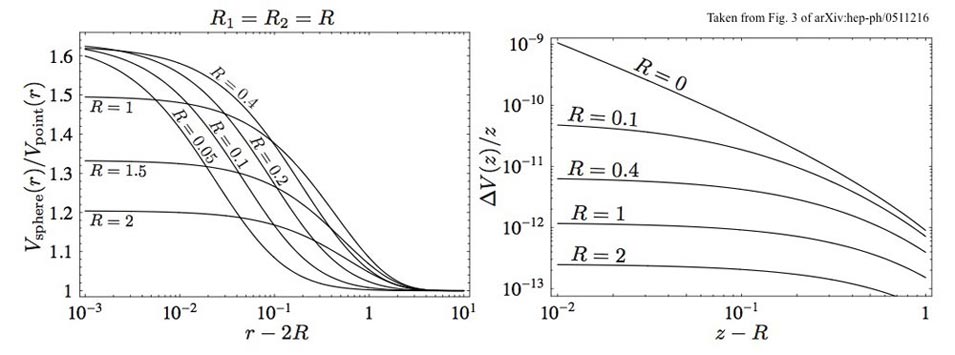

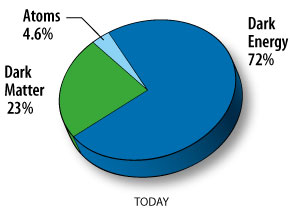

Cosmology provides a second line of evidence that the Standard Model cannot be a complete description of Nature. Although the Standard Model underpins the Hot Big Bang model, which provides a very successful description of cosmological observations, observations in recent years show that the Big Bang model works only if the universe is filled with two different, hitherto unknown, components. Modern surveys show that ordinary matter (that is, matter described by the Standard Model) makes up at most about 5% of the total energy density of the universe. (Although neutrinos and photons are currently the most abundant ordinary particles in the universe, ordinary atoms, mostly hydrogen, dominate the energy density because they are relatively heavy.) The rest consists of Dark Matter (about 25%) and Dark Energy (around 70%). Neither of these can be accommodated within the framework of the Standard Model together with general relativity, so they provide important evidence that something is missing. At present it is not known whether the explanation involves new kinds of particles or new interactions.

Quantum gravity

A third challenge to the completeness of our current understanding comes from the awkward coexistence of the Standard Model (describing the electromagnetic, strong and weak forces) and general relativity (describing gravity). Although no experiment can yet probe gravity over the small distances at which quantum gravity becomes important, 50 years of theoretical research have shown how notoriously difficult it is to find any theoretical framework at all that combines gravity with quantum mechanics in a sensible way.

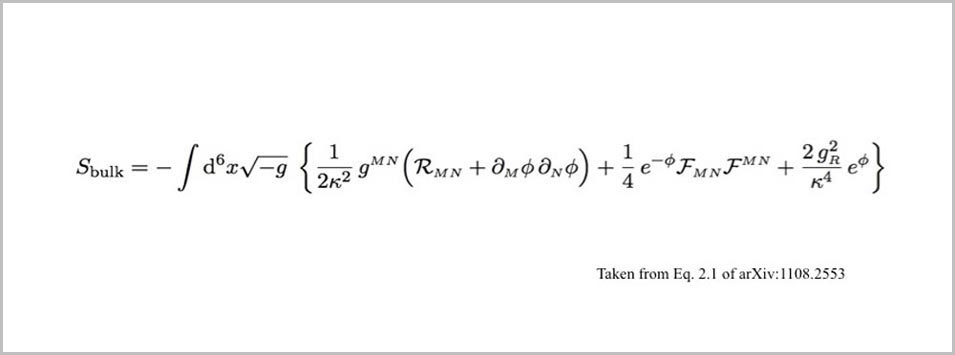

Very few theories have emerged over the years that can claim to have done so, and the best developed of these is string theory. String theory proposes that the elementary constituents of Nature are not particles at all, but strings: fundamental objects with a nonzero length but zero width. Although the jury remains out on whether string theory describes Nature, its tight mathematical structure has provided surprising insights into quantum field theory, the mathematical language in which our description of Nature is cast.

Why is the Standard Model the way it is?

A final indication that the Standard Model is unlikely to be the last word comes from the unusual pattern of masses and couplings it assigns to the various quarks and leptons. Their masses follow a dizzyingly complicated pattern, and their weak interactions mix the generations in a way described by parameters called mixing angles. Why should the pattern of masses be so complicated? Why should there be three near-identical copies of the same generation? Although these features are well described by the Standard Model, they are not explained by it. The present situation resembles the 19th-century understanding of atoms, for which the periodic table summarized many of the systematics revealed by chemical experiments. The explanation for these properties did not come until atoms were recognized to be built from nuclei and electrons, which allowed the properties summarized by the periodic table to be predicted. Similarly, we now have no real explanation for the values of the masses and couplings that describe the known quarks and leptons.

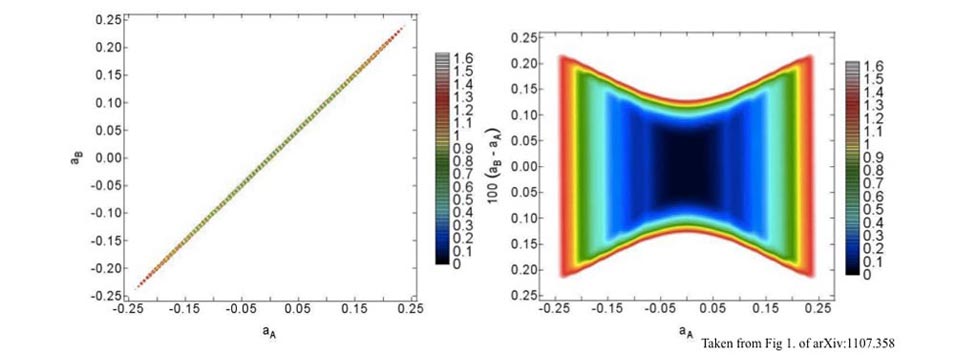

An important part of this puzzle about masses is the question of why all of the mass or distance scales associated with the Standard Model are so different from the scale that appears in general relativity. For example, a representative scale in the Standard Model is set by the Fermi constant, GF ≈ (300 GeV)⁻² (in units where ħ = c = 1), which measures the strength of the weak interactions. The corresponding quantity for general relativity is Newton’s constant, which in the same units is GN ≈ (1.2 × 10¹⁹ GeV)⁻². The enormous hierarchy between these two numbers quantifies how much weaker gravity is than the weak interactions, and is why astrophysical objects (like the Sun) are so much larger than the objects of everyday life.
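The size of this hierarchy is a one-line calculation. The sketch below uses approximate measured values of the Fermi constant GF and Newton’s constant GN in natural units (ħ = c = 1, in GeV⁻², as tabulated by the Particle Data Group), defines each "characteristic scale" M through G = M⁻² as in the text, and prints both scales and their squared ratio.

```python
import math

# Approximate measured values in natural units (hbar = c = 1), in GeV^-2.
FERMI_CONSTANT = 1.1663787e-5   # G_F, strength of the weak interactions
NEWTON_CONSTANT = 6.70883e-39   # G_N, strength of gravity


def characteristic_scale(coupling_gev_minus2):
    """Energy scale M (in GeV) defined by coupling = M**-2."""
    return 1.0 / math.sqrt(coupling_gev_minus2)


weak_scale = characteristic_scale(FERMI_CONSTANT)
gravity_scale = characteristic_scale(NEWTON_CONSTANT)
ratio = FERMI_CONSTANT / NEWTON_CONSTANT  # equals (gravity_scale / weak_scale) ** 2

print(f"weak scale    ~ {weak_scale:.0f} GeV")     # weak scale    ~ 293 GeV
print(f"gravity scale ~ {gravity_scale:.2e} GeV")  # gravity scale ~ 1.22e+19 GeV
print(f"G_F / G_N     ~ {ratio:.1e}")              # G_F / G_N     ~ 1.7e+33
```

Because each constant is the inverse square of its scale, a factor of roughly 4 × 10¹⁶ between the two energy scales becomes a factor of more than 10³³ between the couplings themselves.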

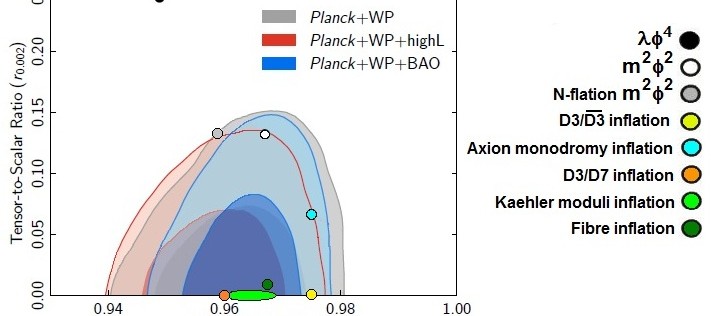

Much thought has been put into how the Standard Model might be modified to understand these puzzles. The vast majority of proposals fall into one or more of three broad classes: compositeness, in which some of the known particles are composites of still-smaller things; supersymmetry, a new symmetry relating matter particles such as quarks and leptons (fermions) to particles like those that carry forces (bosons); and extra dimensions, in which space has more than the standard three dimensions (a natural possibility in string theory), some of them large enough to be seen at high-energy accelerators.